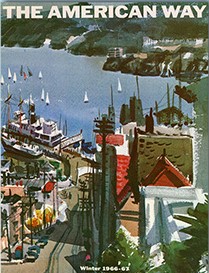

The first-ever in-flight magazine has now become the latest one to fold. American Airlines debuted its seatback publication back in 1966, establishing a precedent that would soon be followed by all the other U.S.-based passenger airlines as well as many foreign carriers.

The American Way (later shortened to American Way) started out as a slender booklet of fewer than 25 pages that focused on educational and safety information about American Airlines, its equipment and staff. Initially an annual publication, American Way soon became a monthly magazine.

Its early success owed much to the captive audience that airline passengers were "back in the day." Unless you brought your own book or periodicals on board, the in-flight magazine was a welcome way to pass the time instead of chatting with your seatmates or simply dozing.

As the other U.S. passenger carriers launched their own in-flight magazines, many of these publications grew to more than 100 pages. In their heyday, they very likely outstripped many consumer magazine titles in readership.

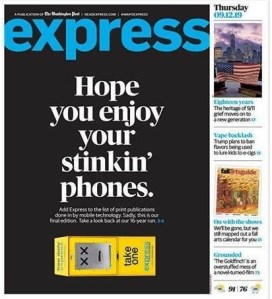

But as with so much else in publishing, they were destined to become a casualty of changing consumer behavior. Interest in leafing through in-flight magazines dropped off when travelers started downloading books, movies and TV shows onto their electronic devices – or tapping into the airlines' own in-flight entertainment options. And when that happened, advertiser interest – the lifeblood of any commercial publication – fell off as well.

American Way's final issue is this month's. Proud to the last, its cover story is about "America's hippest LGBTQ neighborhoods." After June, the magazine will join the in-flight publications that Delta and Southwest Airlines dropped during the COVID-19 pandemic, which won't be returning either.

To be sure, a few titles are hanging on. United Airlines' Hemispheres magazine is due back on planes in July, and Virgin Atlantic plans to relaunch its magazine Vera in September. But these appear to be the exceptions; most other in-flight magazines have disappeared into the ether.

Will they be missed? Travel analyst Henry Harteveldt doesn't seem to think so, as he recently told USA Today:

“I don’t think frequent travelers – or infrequent travelers – will notice or really care to any great degree if the magazine[s] disappear. Certainly, nobody ever chose an airline because of the in-flight magazine.”

I’m in agreement with Mr. Harteveldt on this. But how about you? Will you be missing in-flight magazines at all?

In recent times, the Harvard Business Review has reported on a so-called “new era” that is emerging in marketing. In an