Is social media, to quote Dr. Mark Ritson, “the greatest act of mis-selling in the history of marketing”?

For people who might have wondered if the coronavirus pandemic and the resulting “lockdown culture” that followed would bring more clarity to the debate about the effectiveness of social media, I think it’s safe to conclude that very little has changed in its wake. Many marketing folks continue to suspect that social media may be closer to “all hat, no cattle” than they’d like it to be.

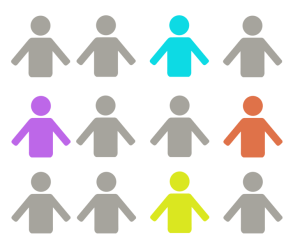

In analyses and evaluations going as far back as a decade, most big companies’ followers on social media have never exceeded 2% to 3% of their brands’ customer base. But the true numbers are even more discouraging, because many brand followers on social media are actually “sleepers” who might have liked a brand in order to participate in a competition, receive a giveaway, or for some other “instant gratification” reason they can’t even recall now.

A more realistic metric is how many people choose to interact with a brand on social media. On that basis, the figures nosedive. Mark Ritson, a brand specialist and professor of marketing who has worked at the London Business School and the University of Minnesota, pegs true engagement at around 0.02% of the people who “like” brands.
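To see how quickly those percentages compound, here is a rough back-of-the-envelope sketch. The customer base of 1,000,000 is a made-up figure for illustration only, and the function name `engaged_customers` is my own; the 2% to 3% follower share and the 0.02% engagement rate are the figures cited above.

```python
from fractions import Fraction


def engaged_customers(customer_base, follower_pct, engagement_pct):
    """Return (followers, engaged followers) for the given percentages.

    Percentages are passed as strings so Fraction can parse them exactly,
    which avoids floating-point rounding noise on values like 0.02.
    """
    followers = customer_base * Fraction(follower_pct) / 100
    engaged = followers * Fraction(engagement_pct) / 100
    return int(followers), int(engaged)


# Hypothetical brand with one million customers (an assumption, not a cited figure).
CUSTOMER_BASE = 1_000_000

for follower_pct in ("2", "3"):
    followers, engaged = engaged_customers(CUSTOMER_BASE, follower_pct, "0.02")
    print(f"{follower_pct}% followers: {followers:,} followers, {engaged} engaged")
```

Under those assumptions, a brand with a million customers would have 20,000 to 30,000 followers, of whom only four to six actually interact with it.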

Other research points to similarly disappointing metrics regarding social media’s impact on purchasing activities. Adobe finds that only about 1% of its social media interactions end up in a purchase, whereas search marketing, direct website traffic and referrals from other websites are the real drivers in terms of the decision to purchase.

So the dynamics haven’t really budged in recent times. At their core, social media channels enable people to communicate with one another, not with brands. For the kind of brand marketing we routinely see happening there, social media is little more than an advertising medium offering inventory like any other advertising business. But advertising isn’t the reason people are on social media in the first place – hence the disconnect.

Contrast those dynamics with organic search and paid search marketing, which come into play when people are searching for answers to questions – often about products and what’s available to purchase. In that regard, any investment in search marketing is money better spent, because it helps keep websites aligned with how Google’s search bots judge which content gets shown “first and best” on search engine results pages. Marketers can see the results and judge the customer acquisition costs accordingly.

Over in the social media world, it’s true that the biggest brands can show some “success” in their audience engagement, but it’s likely because they have such a huge brand presence to begin with. That simply isn’t the case with the vast majority of companies. For them, the road to commercial success likely doesn’t run through Social Mediaville.

What are your own personal experiences with marketing via social media? Has the reality lived up to the promise? Please share your thoughts and observations with other readers here.
